Intro

It's been almost two months since we launched Data Platform Generator, and we're already at V2. During this time, we received over a hundred of feedbacks as comments, opinions, and recommendations from friends, colleagues, partners, vendors, and the data community, which encouraged us to continue with this conceptual and fun project. A big "Thank You!" to everyone who has interacted with us so far and those who visited the site and found inspiration among the published data solution blueprints.

Inspiration, NOT recommendation, was the main idea when we launched this site and described it here. We want to expose data professionals to different technologies on the market, which could be an alternative to exiting better-known technologies from established vendors and help them with valuable information when designing a modern data stack.

We can group the feedbacks we received so far into three user categories:

-

"The Confused" – Which didn't get along, at least at the first look, where we're going with this project.

"What is the business model?"

"What do we sell here?"We love brutal and honest feedback, and we made sure to give additional explanations to these users to clarify some of their questions.

First, Data Platform Generator is not a commercial offering, and there is no monetization around it. Second, it is a concept site and a fun project that kept up busy many evenings and nights, even on New Year's eve day.

- "The In-betweeners" – It's not bad, but it's not good either; it's… in-between! The most tricky category of users and the most demanding as well. Excellent recommendation thought—more in the FAQ section below.

-

"Enthusiasts"

"We love the site and the idea!"

"We play with it in our weekly team meeting and had fun!"

"I didn't know all these tools existed until I saw your site!"

"Keep up the good work!"My favorite and the most impactful feedback so far:

"We are actively looking for a Data Cataloging solution in the market, and a friend recommended your site. We had a meeting with our architects, looked over your site, and ended up with a list of vendors at the end of it. We saved a lot of hours versus searching for this information on Google."

Hey, I sense some business opportunities here!

We extracted and populated our development backlog with user stories from this feedback. Overall it looks like our initial idea of having a simple site, a "Google-like search engine" for data solution blueprints, may go beyond that in the next interations.

Users expect more functionalities, interactions, and information, basically, a new experience. They want a visual tool to build their data platform, not just a generator, the option to choose a data tool in using different filters and parameters (e.g., data volume, native integration, cloud vs. on-premise, non-functional requirements, industry, budget, use-cases, architectural patterns, and the list go on). We also need to define a new scope and plan to implement these changes.

Will this project remain at the concept level, or will it become a business offering?

Changes

Let's focus on the main changes in the V2:

Design

The design was the most significant issue with V1, from the many complaints we received from our users. We also knew from the start that our site is not a mobile-first design, a big mistake, which probably led to visitors skipping the site after a few seconds. It was the first reason to speed up with the V2 release.

V1 was built with Bubble, a No-Code (or Low-Code) application that helped us quickly design the interface. It was our first experiment with such technology. The learning curve was decent – for two experienced programmers. Still, we realized in the development process that this tool is limited in the functionalities we wanted to implement on our site.

We decided to keep the simple design and use Bootstrap – for the mobile-first design and JavaScript – for the logic and interactivity to implement the new version of the site. We are located in Luxembourg, so we decided to go with Hetzner for hosting. They have servers in Germany, Finland, and the USA (where the site is hosted), an easy administration interface, and excellent service support.

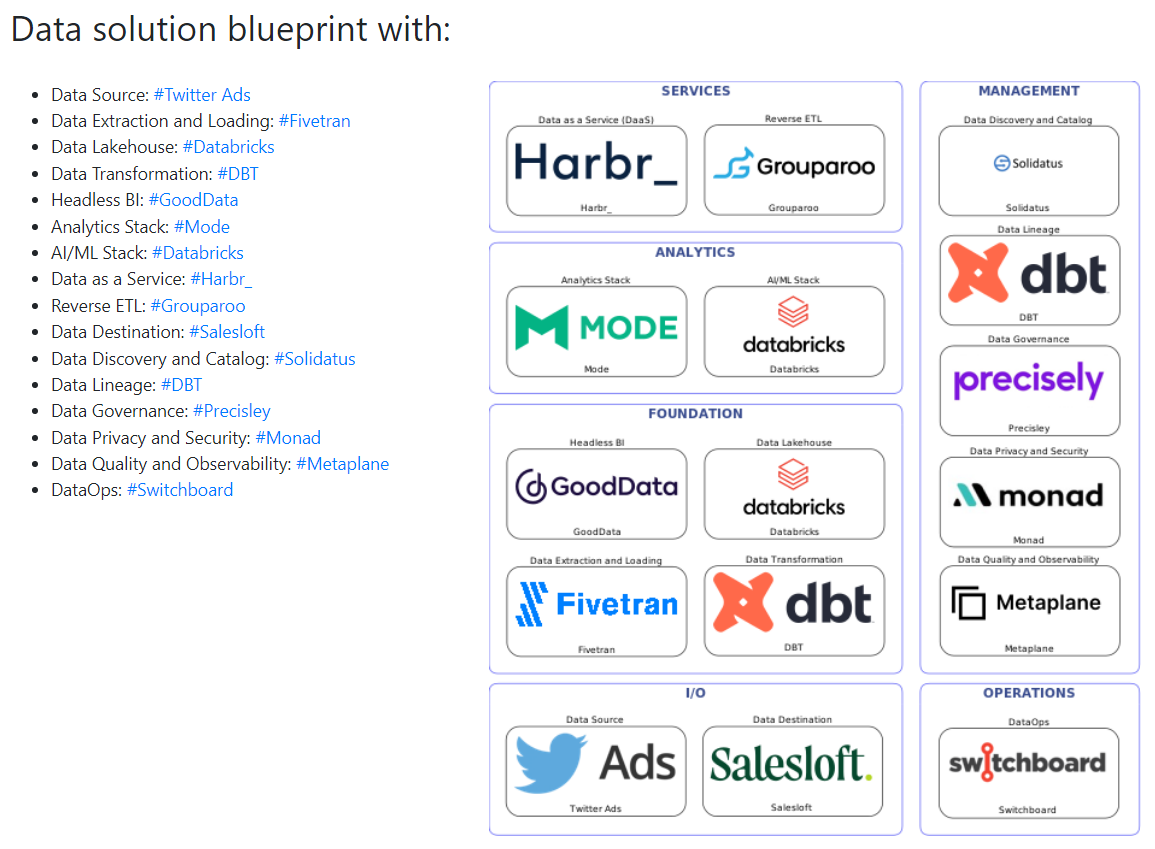

We now have a "PowerPoint view" on the view page that can be selected with your favorite snipping tool and added to your presentation document. We got this suggestion from a user, so again a big "Thank you!" from our hearts.

On this page, you can also add your custom tags, give your rating, share the data solution blueprint with your colleagues and friends, and add your comments.

Your input counts a lot in the site's dynamic and user interactions, as some are looking for more than inspiration. They are looking for advice, lessons learned, best practices, and a discussion point. We sincerely appreciate this effort on your part.

Number of tools

Initially, we had 20+ tools, but now we have 500+ and still counting. Practically, we can generate unlimited combinations till the end of time. We estimated in Wolfram and ended up with an astronomical number that is hard to pronounce even by specialists.

Why so many tools? – A user commented:

"You'll work with maybe 2% of those tools."Well, because we have a lot of tools in the market nowadays, this number will increase in the following years.

More than twenty years ago, when I started my career in Data & Analytics, it was pretty simple: You had 3-4 database engines as candidates for your Data Warehouse, 3-4 ETL tools, and 3-4 BI tools, and that's it. End of story. And even so, most of the implementations failed…, but this is another story for other times.

Today we have hundreds and even thousands of tools that practically make it impossible to learn, test, and use each one of them. Choosing the right one for your use case becomes challenging, as the differences between similar capabilities are shallow.

Search

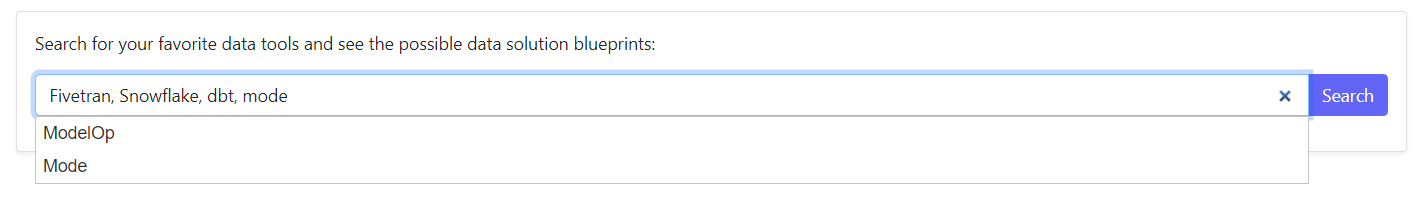

After the re-design, the Seach function was the second priority for us. Users requested it to filter out tools by a vendor or a combination of vendors (e.g., Fivetran, Snowflake, dbt, Mode).

An example as a result of this search is below:

By default, we generate 50 data solution blueprints ( 5 x 10 pages) when you search, but you can search again on the same filter/filters if you need more to see. The probability of having the same blueprints is very, very low. We cannot generate more for now, as we have limited hardware capabilities. Also, we want our users to have a pleasant experience when browsing our site.

Blueprint

Initially, we had three blueprint designs for the V1: Simple, Medium, and Complex. Building these blueprints was a "hot subject" between my technical partner and me, as we wanted that even non-data professionals would understand.

These choices were about:

"How many components do you need to build a simple/medium/complex modern data stack?"

This question was evident in our heads but not in our user's heads—maybe too much architectural "thinking" from our side.

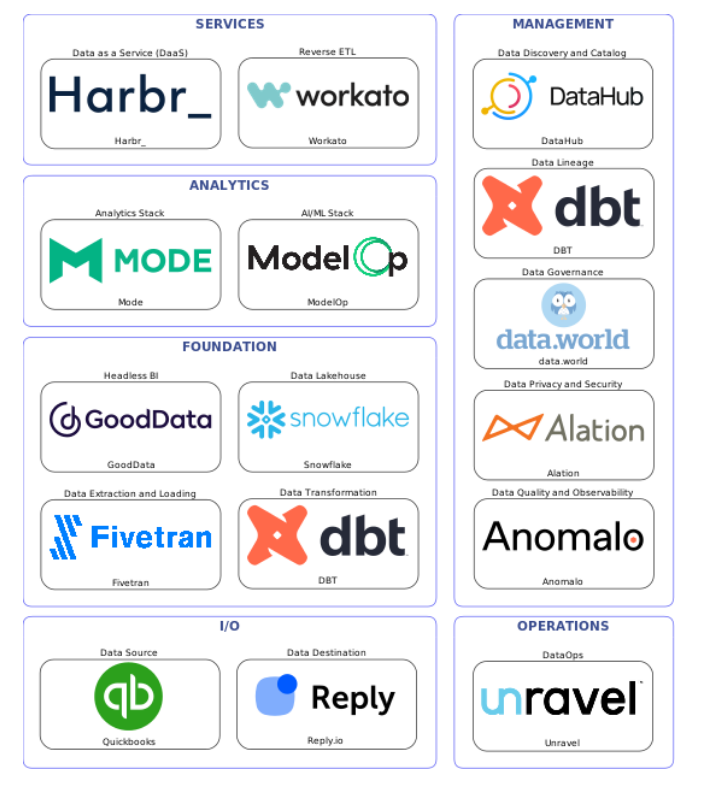

In the end, the Simple, Medium, Complex choices were misleading for some of the users, based on their remarks, so we chose for V2 to have one conceptual architecture blueprint for a complete modern data stack. This new blueprint targets data professionals to use it to inspire their work.

The data solution blueprint is split into six zones or "Layers" as we call them in the architectural language:

-

I/O

- Data Source

- Data Destination

-

Foundation

- Data Lakehouse

- Data Extraction and Loading

- Data Transformation

- HeadlessBI

-

Analytics

- Analytics Stack

- AI/ML Stack

-

Services

- Data as a Service

- Reverse ETL

-

Management

- Data Discovery and Catalog

- Data Lineage

- Data Governance

- Data Privacy and Security

- Data Quality and Observability

-

Operations

- DataOps

Note: I'm preparing a new article describing each component in detail and the architectural patterns that can be implemented and served by such a blueprint, so please subscribe and follow me for future updates.

Each component represents a tool or a vendor. Sometimes the tool is similar to the vendor, but we added them in multiple categories for vendors with a suite of tools (e.g., Collibra). We got requests from users to add the product name, but this means a lot of effort to maintain this information.

The market for modern data stack products is very dynamic. We see new tools pop up each month, vendors who add new capabilities (especially in the Data Management and Operations Layers), M&A activities, and rebranding (e.g., GoodData); so we are trying to keep up with all these changes on the site as much as we can.

"But Adrian, where are the arrows?"

In V2, we first decided to remove the arrows because some users found them misleading, especially in the V1 medium and complex blueprints. Second, we decided to go with a complete modern data stack blueprint, and the arrows will add more complexity to the design.

The modern data stack blueprint goes beyond the point-to-point integration we know from the "BI old days" – the classical:

Data Source → Extract, Load, and Transform → Data Warehouse → BI tool

Nowadays, using Data Transformation and Analytical tools, we have a write-back data pipeline to the Data Lakehouse. If we use a Reverse ETL tool that gets data from the Data Lakehouse and writes it back to the Data Destination, which can feed the Data Lakehouse again, it's a closed data pipeline loop.

The arrows will make sense when I describe the architectural patterns closer to the solution architecture than the conceptual architecture, which is the main scope of the site today.

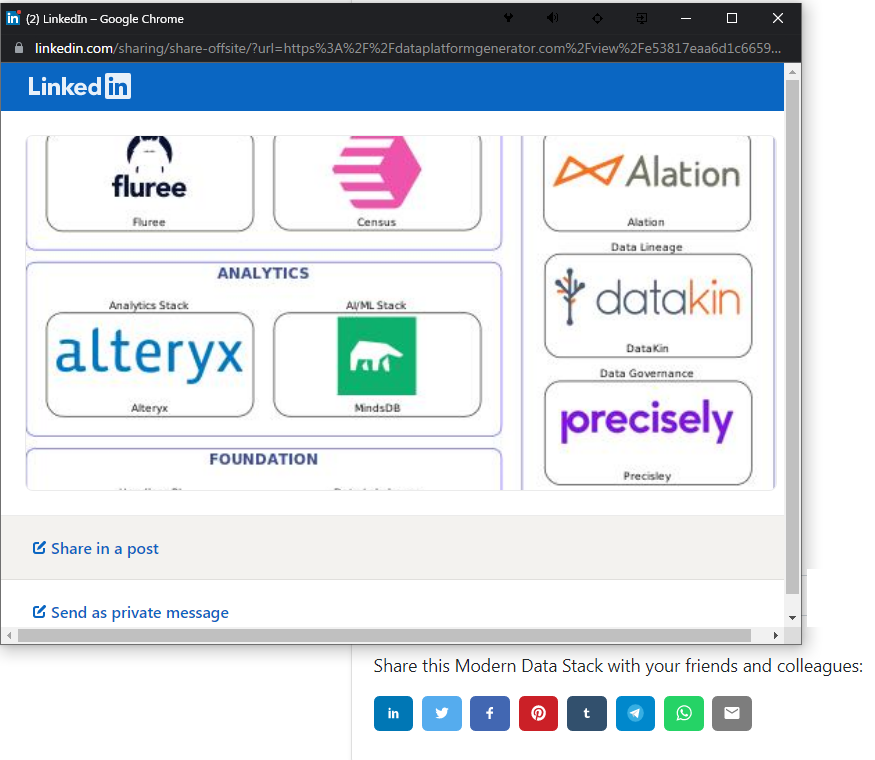

Social Media sharing

We had the Social Media buttons in V1, but we couldn't share the individual data solution blueprints, so in V2, we managed to implement this function. We have a huge marketing budget of 0 EUR/0 USD to promote our site on social media channels and other data-specialized web platforms. We are counting on our users who like the site and the idea to share it with their relatives, friends, colleagues, and partners. It's beyond our scope to become viral or famous, but who knows? Maybe we will get some traction if the Kardashian clan enters the modern data stack market.

You can share on LinkedIn, Twitter, Facebook, Pinterest, Tumblr, Telegram, Whatsup, Email and SMS (on mobile only).

F.A.Q

Some of the questions and answers we exchanged with our users make sense to be part of a FAQ section that may end up as a dedicated page on our site. So far, the most exciting topics are:

Why are there no/few on-premise tools?

Modern Data Stack was born in the cloud, so it makes sense to have cloud-only tools. Some tools from the site can be deployed on-premise, but our focus is cloud deployments.

Why are there no Azure/AWS/GCP tools?

We decided not to include these tools, as each cloud vendor provider (CVP) has its data reference architecture (blueprints) – which change frequently, and we don't want to reinvent the wheel.

Another reason is that we wanted to present tools that are not cloud-lock, vendor-lock, and data-lock as much as possible. There are some databases like PostgreSQL, MariaDB, and MySQL on different cloud provider offerings, as data sources.

Why are there no Redshift and BigQuery as Data Lakehouses?

Both databases are part of the modern data stack "movement" but are cloud-lock tools. Redshift is part of the AWS offering ONLY, and it's going serverless… or something like that; BigQuery is part of the GCP offering ONLY and is making extensive efforts in becoming an open data lakehouse.

Why are there no traditional databases like SQL Server, Oracle, IBM DB2? These are not data lakehouses?

First, these are databases that everyone knows, even the non-IT people. Our goal with the site is to present new tools from the modern data stack wave, which very few people know, and make them visible via the data solution blueprints. All these databases are still a good option for Data Warehouse, but they need a different architecture to become Data Lakehouses, which probably will not happen.

Why is PostgreSQL a Data Lakehouse?

PostgreSQL is a popular database, especially when you are a startup with a limited budget and a very reliable analytical data model when you design it like that. To claim PostgreSQL is a "data lakehouse" is "wishful thinking" from our side. We are strong supporters of the project, and who knows what it will become in a few years?

Why there is no Tableau, PowerBI, Qlik tools?

First, everyone knows about them, especially business people. Second, these tools were initially born as user-desktop tools (DOLAP tools), so the accent is on the user, not on the team; the collaborative aspects happen only in the server implementation, and it's not great. Third, they come with a proprietary data model, and if you need to port this to another analytical tool, you end up creating it from scratch. The Headless BI tools will solve this problem by providing a unified semantic model which multiple analytical tools can use.

Future

So, when the V3 is released?

Officially, the V3 is postponed.

Don't worry. We have the backlog, and we will still add user stories when you come up with improvement suggestions.

We are currently working on our first open-source modern data stack.

We are taking the concept of the data solution blueprint from a "Picture" to a "Script" where you can deploy an entire modern data stack in minutes in your cloud provider of choice, using Cloud-Native technologies, Everything as Code and DevOps best practices.